Addicted: An Industry Matures by Ted McCarthy.

From the post:

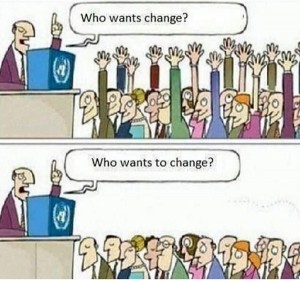

Perhaps nothing better defines our current age than to say it is one of rapid technological change. Technological improvements will continue to provide more to individuals and society, but also to demand more: demand (and leak) more of our data, more time, more attention and more anxieties. While an increasingly vocal minority have begun to rail against certain of these demands, through calls to pull our heads away from our screens and for corporations and governments to stop mining user data, a great many in the tech industry see no reason to change course. User data and time are requisite in the new business ecosystem of the Internet; they are the fuel that feeds the furnace.

Among those advocating for more fuel is Nir Eyal and his recent work, Hooked: How to Build Habit-Forming Products. The book — and its accompanying talk — has attracted a great deal of attention here in the Bay Area, and it’s been overwhelmingly positive. Eyal outlines steps that readers — primarily technology designers and product managers — can follow to make ‘habit-forming products.’ Follow his prescribed steps, and rampant entrepreneurial success may soon be yours.

Since first seeing Eyal speak at Yelp’s San Francisco headquarters last fall, I’ve heard three different clients in as many industries refer to his ideas as “amazing,” and some have hosted reading groups to discuss them. His book has launched to Amazon’s #1 bestseller spot in Product Management, and hovers near the same in Industrial & Product Design and Applied Psychology. It is poised to crack into the top 1000 sellers across the entire site, and reviewers have offered zealous praise: Eric Ries, a Very Important tech Person indeed, has declared the book “A must read for everyone who cares about driving customer engagement.”

And yet, no one offering these reviews has pointed out what should be obvious: that Eyal’s model for “hooking” users is nearly identical to that used by casinos to “hook” their own; that such a model engenders behavioral addictions in users that can be incredibly difficult to overcome. Casinos may take our money, but these products can devour our time; and while we’re all very aware of what the casino owners are up to, technology product development thus far has managed to maintain an air of innocence.

While it may be tempting to dismiss a book seemingly written only for, and read only by, a small niche of $12 cold pressed juice-drinking, hoodie and flip flop-wearing techies out on the west coast, one should consider the ways in which those techies are increasingly creating the worlds we all inhabit. Technology products are increasingly determining the news we read, the letters we send, the lovers we meet and the food we eat — and their designers are reading this book, and taking note. I should know: I’m one of them.

…

I start with Ted McCarthy’s introduction because that is how I found out about Hooked: How to Build Habit-Forming Products by Nir Eyal. It certainly sounded like a book that I must read!

I was hoping to find reviews sans moral hand-wringing but even Hooked: How To Make Habit-Forming Products, And When To Stop Flapping by Wing Kosner gets in on the moral concern act:

In the sixth chapter of the book, Eyal discusses these manipulations, but I think he skirts around the morality issues as well as the economics that make companies overlook them. The Candy Crush Saga game is a good example of how his formulation fails to capture all the moral nuance of the problem. According to his Manipulation Matrix, King, the maker of Candy Crush Saga, is an Entertainer because although their product does not (materially) improve the user’s life, the makers of the game would happily use it themselves. So, really, how bad can it be?

Consider this: Candy Crush is a very habit-forming time-waster for the majority of its users, but a soul-destroying addiction for a distinct minority (perhaps larger, however, than the 1% Eyal refers to as a rule of thumb for user addiction.) The makers of the game may be immune to the game’s addictive potential, so their use of it doesn’t necessarily constitute a guarantee of innocuousness. But here’s the economic aspect: because consumers are unwilling to pay for casual games, the makers of these games must construct manipulative habits that make players seek rewards that are most easily attained through in-app purchases. For “normal” players, these payments may just be the way that they pay to play the game instead of a flat rate up-front or a subscription, and there is nothing morally wrong with getting paid for your product (obviously!) But for “addicted” players these payments may be completely out of scale with any estimate of the value of a casual game experience. King reportedly makes almost $1 million A DAY from Candy Crush, all from in app purchases. My guess is that there is a long tail going on with a relative few players being responsible for a disproportionate share of that revenue.

…

This is in Forbes.

I don’t read Forbes for moral advice. I don’t consult technologists either. For moral advice, consult your local rabbi, priest or imam.

Here is an annotated introduction to Hooked, if you want to get a taste of what awaits before ordering the book. If you visit the book’s website, you will be offered a free Hooked workbook. And you can follow Nir Eyal on Twitter: @nireyal. (Whatever else can be said about Nir Eyal, he is a persistent marketeer!)

Before you become overly concerned about the moral impact of Hooked, recall that legions of marketeers have labored for generations to produce truly addictive products, some with “added ingredients” and others, more recently, not. Creating addictive products isn’t as easy as “read the book” and the rest of us will start wearing bras on our heads. (Apologies to Scott Adams and especially to Dogbert.)

Implying that you can make all of us into addictive product mavens, however, is a marketing hook that few of us can resist.

Enjoy!