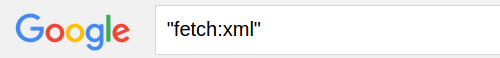

I ask about Honestsociety.com because when I search on Google with the string:

honest society member

I get 82,100,000 “hits,” and the first page is entirely honor society stuff.

No “did you mean,” no “displaying results for…,” nothing of the sort.

Not a one.

The top of the second page of results did list a webpage that mentions honestsociety.com, but not Honestsociety’s own site.

I can’t recall seeing an Honestsociety ad on Google and thought perhaps one of you might have.

Lacking such ads, my seat-of-the-pants explanation for why “honest society member” returns non-responsive “honor society” listings isn’t very generous.

What anomalies have you observed in Google (or other) search results?

What searches would you use to test whether Google ranks its advertisers differently from non-advertisers?

Rigging Searches

For my part, the question isn’t whether search results are rigged, but rather: are they rigged the way my client or I prefer?

Or, to put it positively: All searches are rigged. If you think otherwise, you haven’t thought very deeply about the problem.

Take library searches, for example. Do you think they are “fair” in some sense of the word?

Hmmm, would you agree that the collection practices of a library will give a user an impression of the literature on a subject?

So the search itself isn’t “rigged,” but the data underlying the results certainly influences the outcome.

If you let me pick the data, I can guarantee whatever search result you want to present. Ditto for the search algorithms.
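Here is a toy sketch in Python that makes the point concrete. It does not model how Google or any library catalog actually ranks results. The documents, the two collections, and the `REWRITES` table are all hypothetical. The same query gets a different top result when the collection changes (a choice about data) or when a query-rewriting rule is added (a choice about algorithms).

```python
# Toy illustration: the same query, ranked over different data and with
# different scoring rules, yields different "top" results.
# All documents, collections, and the REWRITES table are hypothetical.

QUERY = "honest society member"

# Collection A includes the Honestsociety page; collection B never "collected" it.
COLLECTION_A = [
    "honor society member benefits",
    "national honor society chapters",
    "honest society member directory",
]
COLLECTION_B = [
    "honor society member benefits",
    "national honor society chapters",
]

# Hypothetical query-rewriting rule: the algorithm "decides" that
# "honest" should also match "honor".
REWRITES = {"honest": {"honest", "honor"}}


def tokenize(text):
    """Lowercase and split on whitespace."""
    return text.lower().split()


def exact_score(query, doc):
    """Count query terms that appear verbatim in the document."""
    doc_terms = set(tokenize(doc))
    return sum(1 for term in tokenize(query) if term in doc_terms)


def rewriting_score(query, doc):
    """Count query terms that match after applying the REWRITES table."""
    doc_terms = set(tokenize(doc))
    return sum(1 for term in tokenize(query)
               if REWRITES.get(term, {term}) & doc_terms)


def search(query, collection, score):
    """Return documents with a positive score, best first.

    Python's sort is stable, so ties keep their collection order.
    """
    hits = [(score(query, doc), doc) for doc in collection]
    hits = [pair for pair in hits if pair[0] > 0]
    hits.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in hits]


if __name__ == "__main__":
    print("Data A, exact match:  ", search(QUERY, COLLECTION_A, exact_score)[0])
    print("Data A, rewriting:    ", search(QUERY, COLLECTION_A, rewriting_score)[0])
    print("Data B, exact match:  ", search(QUERY, COLLECTION_B, exact_score)[0])
```

With collection A and exact matching, the Honestsociety page comes first. Adding the rewriting rule, or dropping that page from the collection, puts an honor society page on top instead. Neither the query nor the user changed, only the choices behind the search.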

The best we can do is make our choices about the data and algorithms explicit, so that others can accept our “rigged” data or choose to “rig” it differently.