Visualizing Nonlinear Narratives with Story Curves by Nam Wook Kim, et al.

From the webpage:

A nonlinear narrative is a storytelling device that portrays events of a story out of chronological order, e.g., in reverse order or going back and forth between past and future events. Story curves visualize the nonlinear narrative of a movie by showing the order in which events are told in the movie and comparing them to their actual chronological order, resulting in possibly meandering visual patterns in the curve. We also developed Story Explorer, an interactive tool that visualizes a story curve together with complementary information such as characters and settings. Story Explorer further provides a script curation interface that allows users to specify the chronological order of events in movies. We used Story Explorer to analyze 10 popular nonlinear movies and describe the spectrum of narrative patterns that we discovered, including some novel patterns not previously described in the literature. (emphasis in original)

Although applied here to movie scripts, this is an innovative visualization with much broader application.

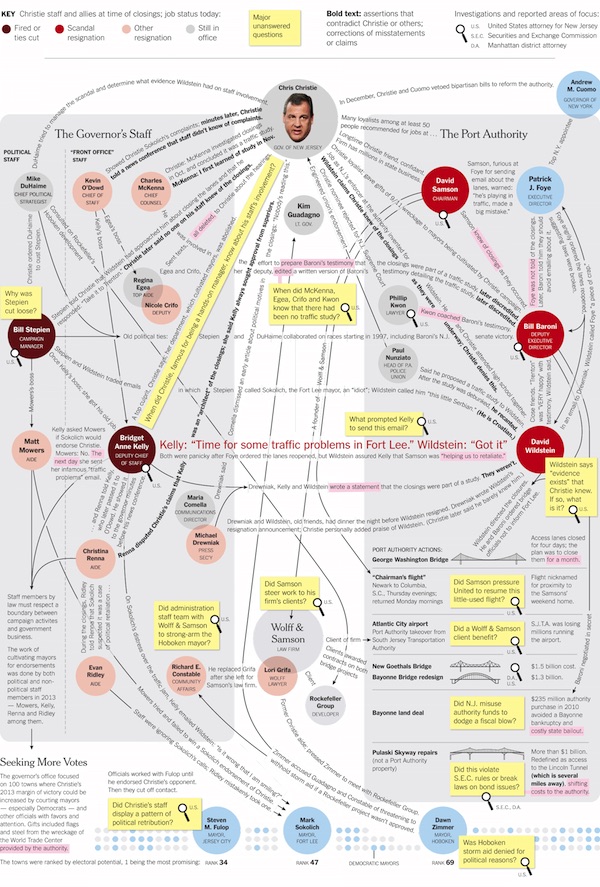
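To make the idea concrete, here is a minimal sketch of the data behind a story curve as the abstract describes it: each event gets one point whose first coordinate is its position in the telling and whose second is its rank in chronological order. The function name `story_curve` and the sample timestamps are my own illustrative assumptions, not the authors' code, which was unavailable when I wrote this.

```python
def story_curve(chronological_keys):
    """Return (narrative_position, chronological_rank) points.

    `chronological_keys` lists one sortable value per event (for example
    a year or timestamp), given in the order the events are TOLD.
    Hypothetical helper for illustration only.
    """
    n = len(chronological_keys)
    # Indices of the events, sorted by when they actually happened.
    by_time = sorted(range(n), key=lambda i: chronological_keys[i])
    rank = [0] * n
    for chrono_rank, narrative_index in enumerate(by_time):
        rank[narrative_index] = chrono_rank
    return list(enumerate(rank))


def is_linear(points):
    """A telling is linear when every point lies on the diagonal."""
    return all(x == y for x, y in points)


# Made-up example: the story opens with its latest event, then flashes back.
told_order_years = [1999, 1985, 1990]
points = story_curve(told_order_years)
print(points)
print(is_linear(points))
```

Plotting those points and joining them in narrative order gives the curve: a straight diagonal for a linear telling, and detours away from it wherever the story jumps in time.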

Investigations by journalists or police officers don’t develop in linear fashion. There are leaps forwards and backwards in time as a narrative is assembled. The resulting “linear” narrative bears little resemblance to its construction.

Imagine being able to visualize and compare the nonlinear narratives of multiple witnesses to a series of events. Two witnesses using the same nonlinear sequence isn’t proof they are lying, but it should at least suggest coordination of their testimony.
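One rough way to put a number on that comparison is to count the pairs of events that two tellings place in opposite order (a Kendall tau style distance). This is only a sketch under my own assumptions: the event labels and witness orderings are invented, `discordant_pairs` is a hypothetical helper, and nothing here comes from Story Explorer.

```python
from itertools import combinations


def discordant_pairs(order_a, order_b):
    """Count event pairs that two tellings place in opposite order.

    Each argument lists event labels in the order one narrator told them.
    Only events present in both tellings are compared.
    """
    pos_a = {event: i for i, event in enumerate(order_a)}
    pos_b = {event: i for i, event in enumerate(order_b)}
    shared = [event for event in order_a if event in pos_b]
    return sum(
        1
        for x, y in combinations(shared, 2)
        if (pos_a[x] - pos_a[y]) * (pos_b[x] - pos_b[y]) < 0
    )


# Invented data: events A, B, C happened in that chronological order.
chronology = ["A", "B", "C"]
witness_1 = ["C", "A", "B"]
witness_2 = ["C", "A", "B"]

print(discordant_pairs(witness_1, witness_2))   # agreement between witnesses
print(discordant_pairs(witness_1, chronology))  # departure from chronology
```

A count of zero between two witnesses, combined with a nonzero count against the chronology, is the pattern described above: both told the story out of order, and in the same way.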

Linear markup systems struggle with nonlinear narratives and there may be value here for at least visualizing those pinch points.

Sadly, the code for Story Curve and Story Explorer is temporarily unavailable as of 5 October 2017. I hope that gets sorted out in the near future.