Open data quality – the next shift in open data? by Danny Lämmerhirt and Mor Rubinstein.

From the post:

Some years ago, open data was heralded to unlock information to the public that would otherwise remain closed. In the pre-digital age, information was locked away, and an array of mechanisms was necessary to bridge the knowledge gap between institutions and people. So when the open data movement demanded “Openness By Default”, many data publishers followed the call by releasing vast amounts of data in its existing form to bridge that gap.

To date, it seems that opening this data has not reduced but rather shifted and multiplied the barriers to the use of data, as Open Knowledge International’s research around the Global Open Data Index (GODI) 2016/17 shows. Together with data experts and a network of volunteers, our team searched, accessed, and verified more than 1400 government datasets around the world.

We found that data is often stored in many different places on the web, sometimes split across documents, or hidden many pages deep on a website. Often data comes in various access modalities. It can be presented in various forms and file formats, sometimes using uncommon signs or codes that are in the worst case only understandable to their producer.

As the Open Data Handbook states, these emerging open data infrastructures resemble the myth of the ‘Tower of Babel’: more information is produced, but it is encoded in different languages and forms, preventing data publishers and their publics from communicating with one another. What makes data usable under these circumstances? How can we close the information chain loop? The short answer: by providing ‘good quality’ open data.

…

Congratulations to Open Knowledge International on re-discovering the ‘Tower of Babel’ problem that prevents easy re-use of data.

Contrary to Lämmerhirt and Rubinstein’s claim, barriers have not “…shifted and multiplied….” It would be more accurate to say that Lämmerhirt and Rubinstein have experienced what many other researchers have found for decades:

…

We found that data is often stored in many different places on the web, sometimes split across documents, or hidden many pages deep on a website. Often data comes in various access modalities. It can be presented in various forms and file formats, sometimes using uncommon signs or codes that are in the worst case only understandable to their producer.

…

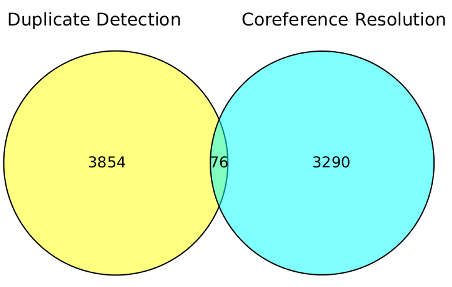

The record linkage community (think medical epidemiology) has been working on aspects of this problem, under that name, since at least the 1950s. It has a rich and deep history, focused in part on mapping diverse data sets to a common representation and then performing analysis on the resulting set.

A common omission in record linkage is the failure to capture, in a discoverable format, the basis for mapping diverse records to a common format. The result is the very thing Lämmerhirt and Rubinstein complain of: subjects represented by “…uncommon signs or codes that are in the worst case only understandable to their producer,” although signs and codes need not be “uncommon” to be misunderstood by others.

To its credit, and unlike RDF and the topic maps default, record linkage has long recognized that identification consists of multiple parts, not single strings.
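To make the contrast concrete, here is a minimal Python sketch of deterministic (exact-match) record linkage on a multi-part identification. The record fields (surname, given name, birth date) and the sample values are hypothetical. Practical record linkage usually goes further, for example with probabilistic scoring along the lines of the Fellegi-Sunter model, rather than requiring every part to match exactly.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    source: str
    local_id: str    # single-string identifier, meaningful only inside its source
    surname: str
    given_name: str
    birth_date: str  # hypothetical convention: ISO 8601, e.g. "1962-04-17"


def normalize(text: str) -> str:
    """Case- and whitespace-insensitive form of a text value."""
    return " ".join(text.lower().split())


def identification(record: Record) -> tuple[str, str, str]:
    """Multi-part identification: several properties taken together."""
    return (normalize(record.surname), normalize(record.given_name), record.birth_date)


def same_subject(a: Record, b: Record) -> bool:
    """Exact-match linkage on the multi-part identification (no probabilistic weights)."""
    return identification(a) == identification(b)


# Two hypothetical records about the same person from different sources.
clinic = Record("clinic", "C-0042", "Smith", "Jane", "1962-04-17")
registry = Record("registry", "88731", "SMITH", "jane ", "1962-04-17")

print(clinic.local_id == registry.local_id)  # False: single strings don't connect them
print(same_subject(clinic, registry))        # True: the parts taken together do
```

Neither `local_id` identifies the subject outside its own source; the combination of parts does, and it already exists in both data stores.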

Topic maps, at least at their inception, were unaware of record linkage and the vast body of research done under that moniker. Topic maps were bitten by the very problem they were seeking to solve: a subject can be identified in many different ways, so information others have discovered about that subject can be nearby but undiscoverable or unknown.

Rather than building on the experience of record linkage, topic maps, at least in the XML version, defaulted to relying on URLs to identify the location of subjects (resources) and/or to identify subjects (identifiers). Avoiding the Philosophy 101 mistakes of RDF (confusing locators with identifiers, then refusing to correct the confusion) wasn’t enough for topic maps to become widespread. One suspects that was in part because topic maps were premised on creating more identifiers for subjects that already had them.

Imagine that your company has 1,000 employees and, in order to use a new system, say topic maps, everyone must get a new name. Can’t use the old one. Do you see a problem? Now multiply that by every subject anyone in your company wants to talk about. We won’t run out of identifiers, but your staff will certainly run out of patience.

Robust solutions to the open data ‘Tower of Babel’ issue will include: the use of multi-part identifications already extant in data stores; dynamic creation of multi-part identifications when necessary (note: with no change to the existing data store); and discoverable documentation of multi-part identifications and their mappings, with the syntax and data models left up to the user of the data.
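The post suggests XQuery for this job; as a language-neutral illustration, here is a minimal Python sketch of the same ideas under stated assumptions. Two hypothetical data stores describe schools with different field names and codes. A documented mapping (the names `MAPPING`, `NORMALIZERS` and `DISTRICT_CODES` are invented for this example) says which field in each source supplies each part of the identification and how each value is normalized. Identifications are built on the fly, the source rows are never modified, and the mapping itself is published as JSON so others can discover the basis for the linkage.

```python
import json

# Hypothetical code list published by one producer: district code -> name.
DISTRICT_CODES = {"03": "north"}

# Named normalization rules, so the documentation can refer to them by name.
NORMALIZERS = {
    "text": lambda v: " ".join(v.lower().split()),  # case/whitespace-insensitive
    "district_code": lambda v: DISTRICT_CODES[v],   # decode "03" -> "north"
}

# Hypothetical documented mapping: which field in each source supplies each
# part of the identification, and which rule normalizes it.
MAPPING = {
    "parts": ["school_name", "district"],
    "sources": {
        "ministry": {
            "school_name": {"field": "nm", "normalize": "text"},
            "district": {"field": "dist", "normalize": "district_code"},
        },
        "council": {
            "school_name": {"field": "school", "normalize": "text"},
            "district": {"field": "district_name", "normalize": "text"},
        },
    },
}

# Two hypothetical data stores; they are read, never modified.
ministry_rows = [{"reg_no": "A-17", "nm": "Springfield  Primary", "dist": "03"}]
council_rows = [{"school": "springfield primary", "district_name": "North"}]


def identify(source: str, row: dict) -> tuple:
    """Build a multi-part identification for a row on the fly."""
    spec = MAPPING["sources"][source]
    return tuple(
        NORMALIZERS[spec[part]["normalize"]](row[spec[part]["field"]])
        for part in MAPPING["parts"]
    )


# Link rows across the two stores whose identifications agree.
links = [
    (m["reg_no"], c["school"])
    for m in ministry_rows
    for c in council_rows
    if identify("ministry", m) == identify("council", c)
]
print(links)  # [('A-17', 'springfield primary')]

# Publish the basis for the mapping alongside the data.
print(json.dumps(MAPPING, indent=2))
```

JSON is only one possible choice here; in keeping with the point above, the syntax and data model of the published mapping are left to whoever uses the data.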

That sounds like a job for XQuery to me.

You?