Sam Hunting and I are working on a presentation for Balisage that involves a subset of the Enron dataset focused on spreadsheets.

You will have to attend Balisage to see the floor show, but I will be posting notes about our preparations for the demo under the categories Enron and/or Spreadsheets.

Origin of the Enron dataset on Spreadsheets

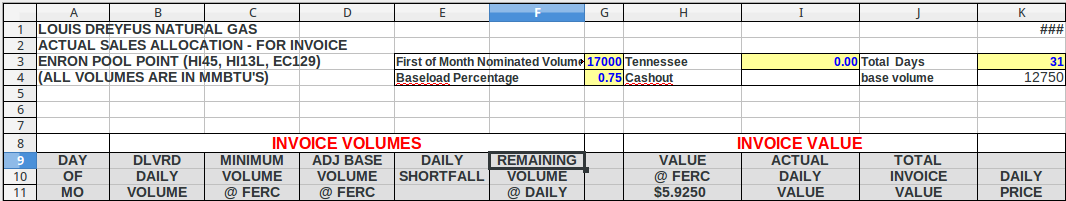

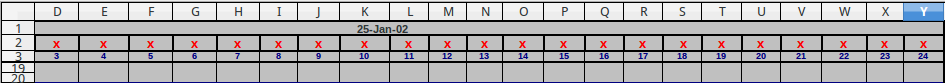

First things first, the subset of the Enron dataset focused on spreadsheets was announced by Felienne Hermans in A modern day Pompeii: Spreadsheets at Enron.

The data set: Hermans, Felienne (2014): Enron Spreadsheets and Emails. figshare. http://dx.doi.org/10.6084/m9.figshare.1221767

Felienne has numerous presentations and publications on spreadsheets and issues with spreadsheets.

I have always thought of spreadsheets as duller versions of tables.

Felienne, on the other hand, has found intrigue, fraud, error, misunderstanding, opacity, and the usual chicanery of modern business practice.

Whether you want to “understand” a spreadsheet depends on whether you need plausible deniability or are trying to detect efforts at plausible deniability. Auditors, for example.

Felienne’s Enron spreadsheet data set is a great starting point for investigating spreadsheets and their issues.

Unpacking the Archives with 7z

The email archive comes in thirteen separate files, eml.7z.001 – eml.7z.013.

At first I tried to use 7z to assemble the archive, decompress it and grep the results without writing it out. No go.

On a subsequent attempt, just unpacking the multi-part file, a message appeared announcing a name conflict and asking what to do with the conflict.

IMPORTANT POINT: Thinking I don’t want to lose any data, I foolishly said to rename files to avoid naming conflicts.

You are probably laughing at this point because you can see where this is going.

The command I used to first extract the files reads: 7z e eml.7z.001 (remembering that in the case of name conflicts I said to rename the conflicting file).

But if you use 7z e, all the files are written to a single directory. Which of course means that for every single file write, it has to check for conflicting file names. Oops!

After more than twenty-four (24) hours of ever slowing output (approximately 528,000 files written at that point), I killed the process and took another path.
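If you want to see how many names would collide when a directory tree is flattened into one directory, a rough check is to list the file names that occur more than once. This is only a sketch: duplicate_basenames is a made-up helper, the alice/bob directories are invented for the demo and are not the layout of the Enron archive, and file names containing newlines would confuse it.

```shell
# Hypothetical helper: print every file name that appears more than once
# anywhere under the directory given as $1. Each name printed would be a
# conflict if the whole tree were written into a single directory.
duplicate_basenames() {
    find "$1" -type f | awk -F/ '{ print $NF }' | sort | uniq -d
}

# Self-contained demo in a throwaway directory (invented names).
demo=$(mktemp -d)
mkdir -p "$demo/alice/inbox" "$demo/bob/inbox"
touch "$demo/alice/inbox/1.eml" "$demo/bob/inbox/1.eml" "$demo/bob/inbox/2.eml"
duplicate_basenames "$demo"
rm -rf "$demo"
```

Piping the result through wc -l gives the number of names that would have triggered the rename prompt.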

I used 7z x eml.7z.001 (correct command), which restores all of the original directories and therefore there are no file name conflicts. File write throughput jumped to more than 20 MB/sec.

Still took seventy-eight (78) minutes to extract but there were other heavy processes going on at the same time.

Like deleting the 528K+ files in the original unpacked directory. Did you know that rm has an argument limit? I’m sure you won’t encounter it often but it can be a real pain when you do. I was deleting all the now unwanted files from the first run when I encountered it.

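A shell limitation according to: Argument List Too Long. More precisely, the shell expands the wildcard into one long list of file names, and the system caps the total size of the arguments that can be handed to a new command, rm included. The limit cited there is 128K bytes, which gives you an idea of the number of files you need to encounter before hitting this issue.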
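One way around the limit is to let find hand the names to rm in batches rather than asking the shell to expand a single enormous wildcard. A minimal sketch, assuming a POSIX find; purge_files is a made-up helper name, and getconf ARG_MAX simply reports the limit in bytes on whatever system you run it on.

```shell
# Hypothetical helper: remove every regular file under the directory in $1.
# "-exec rm -f {} +" makes find call rm repeatedly, each time with as many
# names as fit, so no single command line exceeds the system limit.
purge_files() {
    find "$1" -type f -exec rm -f {} +
}

# Report this system's limit on the combined size of arguments, in bytes.
getconf ARG_MAX
```

Usage would be along the lines of purge_files followed by the path of the directory holding the unwanted files. Note that it removes files in subdirectories too.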

The Lesson

Unpack the Enron email archive with: 7z x eml.7z.001.
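For anyone who wants the extracted tree kept apart from the thirteen archive parts, here is a small sketch that wraps the same command and adds an output directory. The names unpack_enron, SEVENZ and enron_eml are my own inventions for illustration; the x command and the -o switch (written with no space before the path) are standard 7z usage.

```shell
# SEVENZ and unpack_enron are illustrative names, not part of 7-Zip.
SEVENZ="${SEVENZ:-7z}"

unpack_enron() {
    first_part="$1"          # e.g. eml.7z.001; 7z picks up the later parts itself
    dest="${2:-enron_eml}"   # hypothetical output directory
    # x restores the stored directory tree; -o sets where it is written.
    "$SEVENZ" x "$first_part" -o"$dest"
}

# Usage, from the directory holding eml.7z.001 through eml.7z.013:
# unpack_enron eml.7z.001 enron_eml
```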

Tomorrow I will be posting about using Unix shell tools to explore the email data.

PS: Register for Balisage today!