From the post:

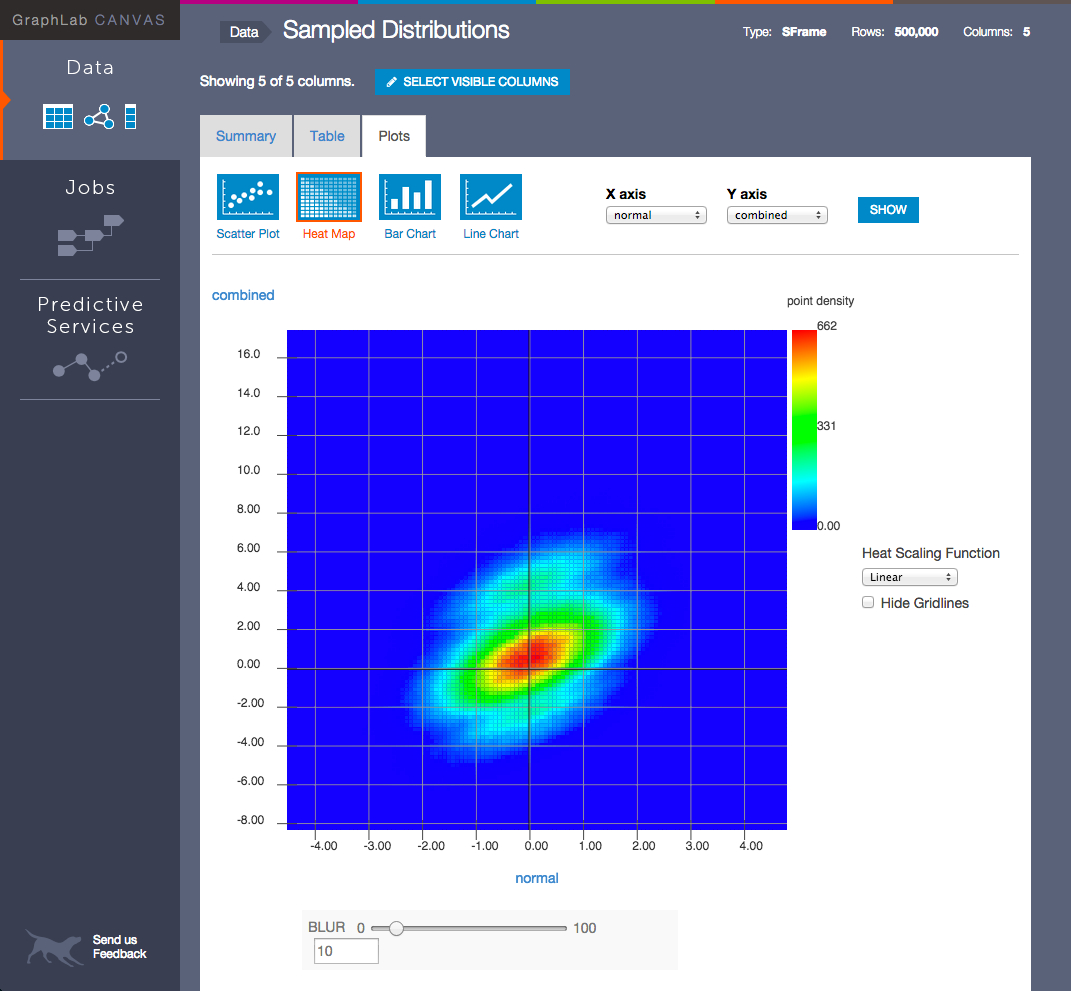

Today at Strata + Hadoop World San Jose, Dato (formerly known as GraphLab) announced new updates to its machine learning platform, GraphLab Create, that allow data science teams to wrangle terabytes of data on their laptops at interactive speeds so that they can build intelligent applications faster. With Dato, users leverage machine learning to build prototypes, tune them, deploy in production and even offer them as a predictive service, all in minutes. These are the intelligent applications that provide predictions for a myriad of use cases including recommenders, sentiment analysis, fraud detection, churn prediction and ad targeting.

Continuing with its commitment to the Open Source community, Dato is also announcing the Open Source release of its core engine, including the out-of-core, machine learning (ML)-optimized SFrame and SGraph data structures, which make ML tasks blazing fast. Commercial and non-commercial versions of the full GraphLab Create platform are available for download at www.dato.com/download.

…

New features available in the GraphLab Create platform include:

- Predictive Service Deployment Enhancements: enables easy integrations of Dato predictive services with applications regardless of development environment and allows administrators to view information about deployed models and statistics on requests and latency on a per predictive object basis.
- Data Science Task Automation: a new Data Matching Toolkit allows for automatic tagging of data from a reference dataset and deduplication of lists automatically. In addition, the new Feature Engineering pipeline makes it easy to chain together multiple feature transformations–a vast simplification for the data engineering stage.
- Open Source Version of GraphLab Create: Dato is offering an open-source release of GraphLab Create’s core code. Included in this version is the source for the SFrame and SGraph, along with many machine learning models, such as triangle counting, pagerank and more. Using this code, it is easy to build a new machine learning toolkit or a connector from the Dato SFrame to a data store. The source code can be found on Dato’s GitHub page.
- New Pricing and Packaging Options: updated pricing and packaging include a non-commercial, free offering with the same features as the GraphLab Create commercial version. The free version allows data science enthusiasts to interact with and prototype on a leading machine learning platform. Also available is a new 30-day, no obligation evaluation license of the full-feature, commercial version of Dato’s product line.

Excellent news!

Now if we just had secure hardware to run it on.

On the other hand, it is open source, so you can verify there are no backdoors in the software. That is a step in the right direction for security.