F1000Research launches rapid, open, publishing channel to help scientists tackle Zika

From the post:

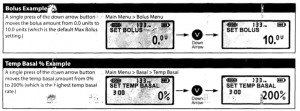

ZAO provides a platform for scientists and clinicians to publish their findings and source data on Zika and its mosquito vectors within days of submission, so that research, medical and government personnel can keep abreast of the rapidly evolving outbreak.

The channel provides diamond-access: it is free to access and articles are published free of charge. It also accepts articles on other arboviruses such as Dengue and Yellow Fever.

The need for the channel is clearly evidenced by a recent report on the global response to the Ebola virus by the Harvard-LSHTM (London School of Hygiene & Tropical Medicine) Independent Panel.

The report listed ‘Research: production and sharing of data, knowledge, and technology’ among its 10 recommendations, saying: “Rapid knowledge production and dissemination are essential for outbreak prevention and response, but reliable systems for sharing epidemiological, genomic, and clinical data were not established during the Ebola outbreak.”

Dr Megan Coffee, an infectious disease clinician at the International Rescue Committee in New York, said: “What’s published six months, or maybe a year or two later, won’t help you – or your patients – now. If you’re working on an outbreak, as a clinician, you want to know what you can know – now. It won’t be perfect, but working in an information void is even worse. So, having a way to get information and address new questions rapidly is key to responding to novel diseases.”

Dr Coffee is also a co-author of an article published in the channel today, calling for rapid mobilisation and adoption of open practices in an important strand of the Zika response: drug discovery – http://f1000research.com/articles/5-150/v1.

Sean Ekins, of Collaborative Drug Discovery, and lead author of the article, which is titled ‘Open drug discovery for the Zika virus’, said: “We think that we would see rapid progress if there was some call for an open effort to develop drugs for Zika. This would motivate members of the scientific community to rally around, and centralise open resources and ideas.”

Another co-author of the article, Lucio Freitas-Junior of the Brazilian Biosciences National Laboratory, added: “It is important to have research groups working together and sharing data, so that scarce resources are not wasted in duplication. This should always be the case for neglected diseases research, and even more so in the case of Zika.”

Rebecca Lawrence, Managing Director, F1000, said: “One of the key conclusions of the recent Harvard-LSHTM report into the global response to Ebola was that rapid, open data sharing is essential in disease outbreaks of this kind and sadly it did not happen in the case of Ebola.

“As the world faces its next health crisis in the form of the Zika virus, F1000Research has acted swiftly to create a free, dedicated channel in which scientists from across the globe can share new research and clinical data, quickly and openly. We believe that it will play a valuable role in helping to tackle this health crisis.”

###

For more information:

Andrew Baud, Tala (on behalf of F1000), +44 (0) 20 3397 3383 or +44 (0) 7775 715775

Excellent news for researchers but a direct link to the new channel would have been helpful as well: Zika & Arbovirus Outbreaks (ZAO).

See this post: The Zika & Arbovirus Outbreaks channel on F1000Research by Thomas Ingraham.

News organizations should note that as of today, 11 February 2016, ZAO offers 9 articles, 16 posters and 1 set of slides. Those numbers are likely to increase rapidly.

Oh, did I mention the ZAO channel is free?

Unlike at some journals, payment, prestige and privilege are not prerequisites for publication.

Useful research on Zika & Arboviruses is the only requirement.

I know, it sounds like a dangerous precedent, but defeating a disease like Zika will require taking risks.