Sci-Hub ‘Pirate Bay For Science’ Security Certs Revoked by Comodo, by Andy.

From the post:

Sci-Hub, often known as ‘The Pirate Bay for Science’, has lost control of several security certificates after they were revoked by Comodo CA, the world’s largest certification authority. Comodo CA informs TorrentFreak that the company responded to a court order which compelled it to revoke four certificates previously issued to the site.

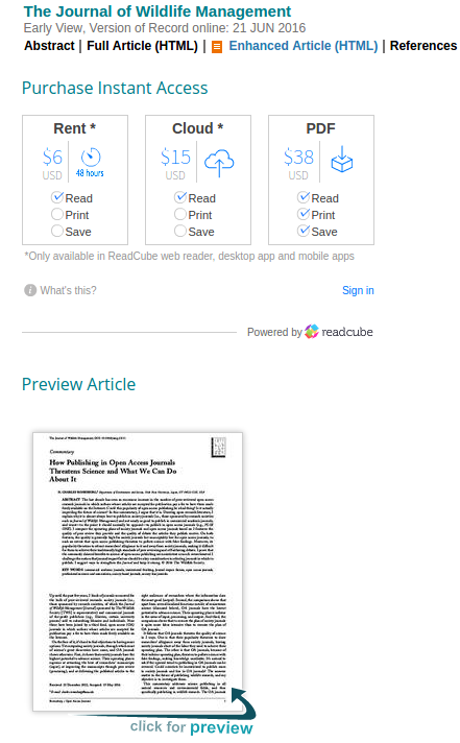

Sci-Hub is often referred to as the “Pirate Bay of Science”. Like its namesake, it offers masses of unlicensed content for free, mostly against the wishes of copyright holders.

While The Pirate Bay will index almost anything, Sci-Hub is dedicated to distributing tens of millions of academic papers and articles, something which has turned it into a target for publishing giants like Elsevier.

Sci-Hub and its Kazakhstan-born founder Alexandra Elbakyan have been under sustained attack for several years but more recently have been fending off an unprecedented barrage of legal action initiated by the American Chemical Society (ACS), a leading source of academic publications in the field of chemistry.

…

While ACS has certainly caused problems for Sci-Hub, the platform is extremely resilient and remains online.

The domains https://sci-hub.is and https://sci-hub.nu are fully operational with certificates issued by Let’s Encrypt, a free and open certificate authority supported by the likes of Mozilla, EFF, Chrome, Private Internet Access, and other prominent tech companies.

It’s unclear whether these certificates will be targeted in the future but Sci-Hub doesn’t appear to be in the mood to back down.

There are any number of obvious ways you can assist Sci-Hub. Others you will discover in conversations with your friends and other Sci-Hub supporters.

Go carefully.