Parallel Graph Partitioning for Complex Networks by Henning Meyerhenke, Peter Sanders, and Christian Schulz.

Abstract:

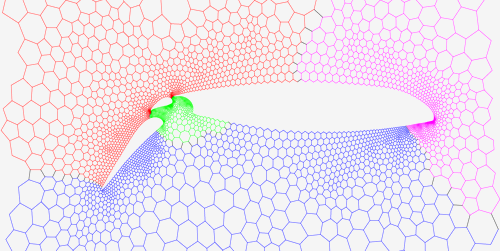

Processing large complex networks like social networks or web graphs has recently attracted considerable interest. In order to do this in parallel, we need to partition them into pieces of about equal size. Unfortunately, previous parallel graph partitioners originally developed for more regular mesh-like networks do not work well for these networks. This paper addresses this problem by parallelizing and adapting the label propagation technique originally developed for graph clustering. By introducing size constraints, label propagation becomes applicable for both the coarsening and the refinement phase of multilevel graph partitioning. We obtain very high quality by applying a highly parallel evolutionary algorithm to the coarsened graph. The resulting system is both more scalable and achieves higher quality than state-of-the-art systems like ParMetis or PT-Scotch. For large complex networks the performance differences are very big. For example, our algorithm can partition a web graph with 3.3 billion edges in less than sixteen seconds using 512 cores of a high performance cluster while producing a high quality partition — none of the competing systems can handle this graph on our system.

Clustering in this article is defined by a node’s “neighborhood.” I am curious whether defining a “neighborhood” based on multi-part (hierarchical?) identifiers might enable parallel processing of merging conditions.
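To make the neighborhood idea concrete, here is a minimal sequential sketch of size-constrained label propagation as I read it from the abstract. It is not the authors' implementation: the function names, the unit node weights, the fixed visiting order, and the `rounds` parameter are my own assumptions, and the parallel version in the paper surely differs in its details.

```python
from collections import defaultdict


def build_adjacency(edges):
    """Build an undirected weighted adjacency map from (u, v, weight) triples."""
    adj = defaultdict(dict)
    for u, v, w in edges:
        adj[u][v] = w
        adj[v][u] = w
    return dict(adj)


def size_constrained_label_propagation(adj, max_cluster_size, rounds=5):
    """Cluster nodes by repeatedly moving each node to the neighboring
    cluster it is most strongly connected to, unless that cluster is full.

    Assumptions (mine, not the paper's): every node has weight 1, nodes are
    visited in sorted order, and ties keep the node where it is.
    """
    label = {v: v for v in adj}        # every node starts in its own cluster
    size = defaultdict(int)
    for v in adj:
        size[v] = 1

    for _ in range(rounds):
        moved = False
        for v in sorted(adj):
            # Total edge weight from v into each cluster in its neighborhood.
            weight_to = defaultdict(int)
            for u, w in adj[v].items():
                weight_to[label[u]] += w

            current = label[v]
            best, best_weight = current, weight_to.get(current, 0)
            for cluster, w in weight_to.items():
                if cluster == current:
                    continue
                if size[cluster] + 1 > max_cluster_size:
                    continue           # the size constraint: cluster is full
                if w > best_weight:
                    best, best_weight = cluster, w

            if best != current:
                size[current] -= 1
                size[best] += 1
                label[v] = best
                moved = True
        if not moved:                  # converged early
            break
    return label


# Two triangles joined by a single bridge edge (2, 3).
edges = [(0, 1, 1), (0, 2, 1), (1, 2, 1),
         (3, 4, 1), (3, 5, 1), (4, 5, 1),
         (2, 3, 1)]
labels = size_constrained_label_propagation(build_adjacency(edges),
                                            max_cluster_size=3)
print(labels)
```

With a cap of three nodes per cluster, each triangle ends up as its own cluster, because node 3 is not allowed to join the already full cluster on the other side of the bridge. Each node only looks at its direct neighbors when deciding where to move, which is what makes the technique a natural candidate for parallel execution.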

While looking for resources on graph contraction, I encountered a series of lectures by Kanat Tangwongsan from Parallel and Sequential Data Structures and Algorithms, 15-210 (Spring 2012) (link to the course schedule with numerous resources):

Lecture 17 — Graph Contraction I: Tree Contraction

Lecture 18 — Graph Contraction II: Connectivity and MSTs

Lecture 19 — Graph Contraction III: Parallel MST and MIS

Enjoy!