Political Discourse on Social Media: Echo Chambers, Gatekeepers, and the Price of Bipartisanship by Kiran Garimella, Gianmarco De Francisci Morales, Aristides Gionis, Michael Mathioudakis.

Abstract:

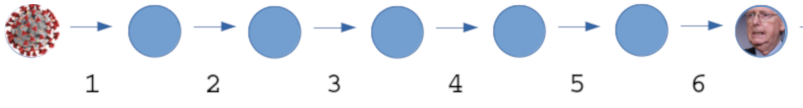

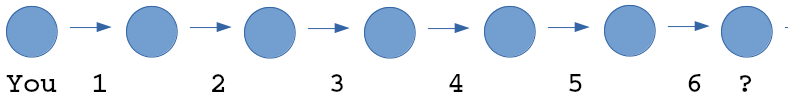

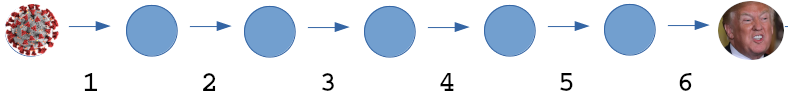

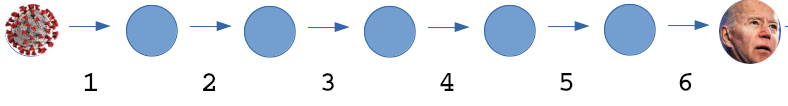

Echo chambers, i.e., situations where one is exposed only to opinions that agree with their own, are an increasing concern for the political discourse in many democratic countries. This paper studies the phenomenon of political echo chambers on social media. We identify the two components in the phenomenon: the opinion that is shared (‘echo’), and the place that allows its exposure (‘chamber’, i.e., the social network), and examine closely how these two components interact. We define a production and consumption measure for social-media users, which captures the political leaning of the content shared and received by them. By comparing the two, we find that Twitter users are, to a large degree, exposed to political opinions that agree with their own. We also find that users who try to bridge the echo chambers, by sharing content with diverse leaning, have to pay a ‘price of bipartisanship’ in terms of their network centrality and content appreciation. In addition, we study the role of ‘gatekeepers’, users who consume content with diverse leaning but produce partisan content (with a single-sided leaning), in the formation of echo chambers. Finally, we apply these findings to the task of predicting partisans and gatekeepers from social and content features. While partisan users turn out to be relatively easy to identify, gatekeepers prove to be more challenging.
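The production and consumption measures, and the gatekeeper pattern, are easier to picture with a small example. What follows is a minimal Python sketch, not the authors' method: it assumes every shared or received item already carries a leaning score in [-1, 1], and it treats production and consumption as the mean leaning of what a user shares and receives. The class, the helper functions, and the thresholds (`threshold`, `diverse`, `narrow`) are all hypothetical.

```python
from dataclasses import dataclass
from statistics import mean, pstdev

# Assumption (hypothetical, not from the paper): each item has a leaning
# score in [-1, 1], where -1 is one side and +1 the other.


@dataclass
class UserActivity:
    produced: list[float]  # leanings of content the user shared (non-empty)
    consumed: list[float]  # leanings of content the user received (non-empty)


def production(u: UserActivity) -> float:
    """Mean leaning of what the user shares."""
    return mean(u.produced)


def consumption(u: UserActivity) -> float:
    """Mean leaning of what the user receives."""
    return mean(u.consumed)


def spread(scores: list[float]) -> float:
    """Population standard deviation, used here as a simple diversity measure."""
    return pstdev(scores)


def echo_gap(u: UserActivity) -> float:
    """Distance between produced and consumed leaning; near 0 suggests an echo chamber."""
    return abs(production(u) - consumption(u))


def is_partisan(u: UserActivity, threshold: float = 0.5) -> bool:
    """Hypothetical rule: production leans clearly to one side."""
    return abs(production(u)) >= threshold


def is_gatekeeper(u: UserActivity, diverse: float = 0.5, narrow: float = 0.2) -> bool:
    """Hypothetical rule: diverse consumption, but narrow, one-sided production."""
    return (
        spread(u.consumed) >= diverse
        and spread(u.produced) <= narrow
        and is_partisan(u)
    )


if __name__ == "__main__":
    # Reads both sides, shares only one side.
    gatekeeper = UserActivity(produced=[0.8, 0.9, 0.7], consumed=[-0.9, 0.8, -0.6, 0.9])
    # Reads and shares the same side.
    partisan = UserActivity(produced=[0.9, 0.8], consumed=[0.85, 0.9, 0.8])
    for name, user in [("gatekeeper", gatekeeper), ("partisan", partisan)]:
        print(name, is_partisan(user), is_gatekeeper(user), round(echo_gap(user), 3))
```

Under these assumptions, both example users count as partisan, but only the first is a gatekeeper, because its consumption is spread across both sides while its production is not. A real analysis would need the paper's own leaning estimates and definitions.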

This is an interesting paper from a technical perspective, especially its findings on gatekeepers, but political echo chambers on Twitter are hardly surprising. Nor are political echo chambers new.

SourceWatch has a limited (time-wise) history of echo chambers and attributes their creation to conservatives:

…conservatives pioneered the “echo chamber” technique,…

Amusing, but I would not give conservatives that much credit.

Consider the echo chambers created in the 1960s by the Wall Street Journal (WSJ) versus the Guardian (formerly the National Guardian, published in New York City), a Marxist publication.

Or the differing content read by pro- versus anti-war activists in the same period. Or by racists versus pro-integration advocates. Or by supporters versus opponents of Roe v. Wade, 410 U.S. 113, 93 S. Ct. 705, 35 L. Ed. 2d 147, 1973 U.S. LEXIS 159 (1973).

Echo chambers existed before the examples I have listed, but these are sufficient to show that echo chambers are not new, despite claims by those who missed their secondary-school history classes.

The charge of “echo chamber” by SourceWatch, for example, carries with it the assumption that information delivered via an “echo chamber” is false, harmful, etc., whereas their own information leads to truth, light, and the American way. (Substitute whatever false totems you have for “the American way.”)

I don’t doubt the sincerity of SourceWatch. I do doubt that approaching others with “…you need to crawl out from under your rock so I can enlighten you with the truth” leads to fewer echo chambers.

Becoming a gatekeeper, with a foot in two or more echo chambers, won’t reduce the number of echo chambers either. But it does have the potential to create gateways between them.

You’ve tried beating on occupants of other echo chambers with little or no success. Why not try co-occupying their echo chambers for a while?