Editors’ Choice: An Introduction to the Textreuse Package by Lincoln Mullen.

From the post:

A number of problems in digital history/humanities require one to calculate the similarity of documents or to identify how one text borrows from another. To give one example, the Viral Texts project, by Ryan Cordell, David Smith, et al., has been very successful at identifying reprinted articles in American newspapers. Kellen Funk and I have been working on a text reuse problem in nineteenth-century legal history, where we seek to track how codes of civil procedure were borrowed and modified in jurisdictions across the United States.

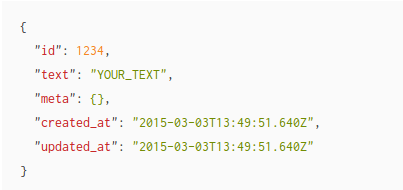

As part of that project, I have recently released the textreuse package for R to CRAN. (Thanks to Noam Ross for giving this package a very thorough open peer review for rOpenSci, to whom I’ve contributed the package.) This package is a general purpose implementation of several algorithms for detecting text reuse, as well as classes and functions for investigating a corpus of texts. Put most simply, full text goes in and measures of similarity come out. (emphasis added)

Kudos to Lincoln on this important contribution to the digital humanities! The package will also be useful to researchers who want to compare the “similarity” of texts as “subjects” in order to eliminate duplication (called merging in some circles) before presenting results to a reader.

I highlighted

Put most simply, full text goes in and measures of similarity come out.

to offer a cautionary tale about the assumption that a high measure of similarity is an indication of the “source” of a text.
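To make “measures of similarity” concrete, here is a minimal Python sketch of one common measure, Jaccard similarity over word trigrams. It is my own illustration and not the textreuse package's API; the helper names `shingles` and `jaccard` are hypothetical, and the two sentences are invented for the example rather than taken from any code or digest.

```python
import re

def shingles(text, n=3):
    """Return the set of word n-grams (shingles) found in text."""
    words = re.findall(r"[a-z']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}

def jaccard(a, b):
    """Shared shingles divided by all shingles appearing in either set."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)

# Invented sentences, for illustration only (assumption: not real legal text).
older = "the seller is bound to deliver the thing sold to the buyer"
later = "the seller is bound to deliver the thing sold and to warrant it"

score = jaccard(shingles(older), shingles(later))
reverse = jaccard(shingles(later), shingles(older))
print(score, reverse)  # 0.5 0.5
```

The score is the same in either direction. A number like this records that two texts share wording, but it carries no information about which text borrowed from which, or about what the drafters meant by borrowing it.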

Louisiana, my home state, is the only civilian jurisdiction in the United States. Louisiana law, more at one time than now, is based upon Roman law.

Roman law and laws based upon it have a very deep and rich history that I won’t even attempt to summarize.

It is sufficient for present purposes to say the Digest of the Civil Laws now in Force in the Territory of Orleans (online version, English/French) was enacted in 1808.

A scholarly dispute arose (1971-1972) between Professor Batiza (Tulane), who considered the Digest to reflect the French civil code, and Professor Pascal (LSU), who argued that, despite quoting the French civil code quite liberally, the redactors intended to codify the Spanish civil law in force at the time of the Louisiana Purchase.

The Batiza vs. Pascal debate was carried out at length and in public:

Batiza, The Louisiana Civil Code of 1808: Its Actual Sources and Present Relevance, 46 TUL. L. REV. 4 (1971); Pascal, Sources of the Digest of 1808: A Reply to Professor Batiza, 46 TUL. L. REV. 603 (1972); Sweeney, Tournament of Scholars over the Sources of the Civil Code of 1808, 46 TUL. L. REV. 585 (1972); Batiza, Sources of the Civil Code of 1808, Facts and Speculation: A Rejoinder, 46 TUL. L. REV. 628 (1972).

I could not find any freely available copies of those articles online. (Don’t encourage paywalls by accessing such material through them. Find it at your local law library.)

There are a couple of secondary articles that discuss the dispute: A.N. Yiannopoulos, The Civil Codes of Louisiana, 1 CIV. L. COMMENT. 1, 1 (2008) at http://www.civil-law.org/v01i01-Yiannopoulos.pdf, and John W. Cairns, The de la Vergne Volume and the Digest of 1808, 24 Tulane European & Civil Law Forum 31 (2009), which are freely available online.

You won’t get the full details from the secondary articles but they do capture some of the flavor of the original dispute. I can report (happily) that over time, Pascal’s position has prevailed. Textual history is more complex than rote counting techniques can capture.

This is a far more complex case of “text similarity” than Lincoln addresses in the Textreuse package, but once you move beyond freshman/doctoral plagiarism, the “interesting cases” are all complicated.