Beyond Transparency, edited by Brett Goldstein and Lauren Dyson.

From the webpage:

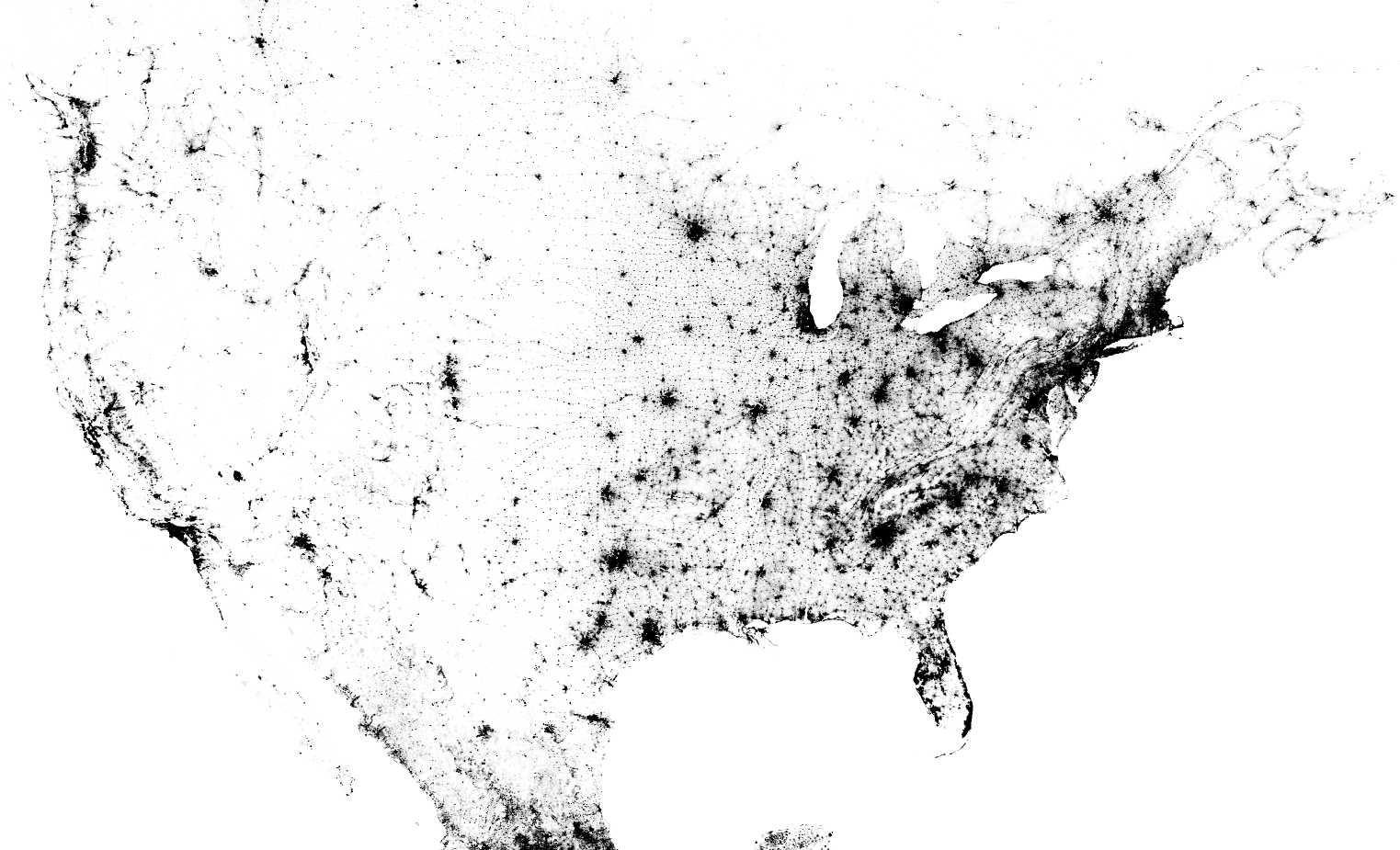

The rise of open data in the public sector has sparked innovation, driven efficiency, and fueled economic development. And in the vein of high-profile federal initiatives like Data.gov and the White House’s Open Government Initiative, more and more local governments are making their foray into the field with Chief Data Officers, open data policies, and open data catalogs.

While still emerging, we are seeing evidence of the transformative potential of open data in shaping the future of our civic life. It’s at the local level that government most directly impacts the lives of residents—providing clean parks, fighting crime, or issuing permits to open a new business. This is where there is the biggest opportunity to use open data to reimagine the relationship between citizens and government.

Beyond Transparency is a cross-disciplinary survey of the open data landscape, in which practitioners share their own stories of what they’ve accomplished with open civic data. It seeks to move beyond the rhetoric of transparency for transparency’s sake and towards action and problem solving. Through these stories, we examine what is needed to build an ecosystem in which open data can become the raw materials to drive more effective decision-making and efficient service delivery, spur economic activity, and empower citizens to take an active role in improving their own communities.

Let me list the titles for two (2) parts out of five (5):

- PART 1 Opening Government Data

- Open Data and Open Discourse at Boston Public Schools, Joel Mahoney

- Open Data in Chicago: Game On, Brett Goldstein

- Building a Smarter Chicago, Dan X O’Neil

- Lessons from the London Datastore, Emer Coleman

- Asheville’s Open Data Journey: Pragmatics, Policy, and Participation, Jonathan Feldman

- PART 2 Building on Open Data

- From Entrepreneurs to Civic Entrepreneurs, Ryan Alfred, Mike Alfred

- Hacking FOIA: Using FOIA Requests to Drive Government Innovation, Jeffrey D. Rubenstein

- A Journalist’s Take on Open Data, Elliott Ramos

- Oakland and the Search for the Open City, Steve Spiker

- Pioneering Open Data Standards: The GTFS Story, Bibiana McHugh

Steve Spiker captures my concerns about the efficacy of “open data” in his opening sentence:

At the center of the Bay Area lies an urban city struggling with the woes of many old, great cities in the USA, particularly those in the rust belt: disinvestment, white flight, struggling schools, high crime, massive foreclosures, political and government corruption, and scandals. (Oakland and the Search for the Open City)

It may well be that I agree with “open data,” in part because I have no real data to share. So any sharing of data is going to benefit me and whatever agenda I want to pursue.

People who are pursuing their own agendas without open data have nothing to gain from an open playing field and more than a little to lose, particularly if they are on the corrupt side of public affairs.

All the more reason to pursue open data, in my view, but with the understanding that every line of data access benefits some and penalizes others.

Take the long-standing tradition of not publishing who meets with the President of the United States. It is justified on the basis that the President needs open and frank advice from people who feel free to speak openly.

That’s one explanation. Another explanation is that being clubby with media moguls would look inconvenient while the U.S. trade delegation is pushing a pro-media position, to the detriment of us all.

When open data is used to take down members of Congress, the White House, and the heads and staffs of agencies, it will truly have arrived.

Until then, open data is just whistling as it walks past a graveyard in the dark.

I first saw this in a tweet by ladyson.