Pentagon identifies cause of F-35 radar software issue

From the post:

The Pentagon has found the root cause of stability issues with the radar software being tested for the F-35 stealth fighter jet made by Lockheed Martin Corp, U.S. Defense Acquisition Chief Frank Kendall told a congressional hearing on Tuesday.

Last month the Pentagon said the software instability issue meant the sensors had to be restarted once every four hours of flying.

Kendall and Air Force Lieutenant General Christopher Bogdan, the program executive officer for the F-35, told a Senate Armed Service Committee hearing in written testimony that the cause of the problem was the timing of “software messages from the sensors to the main F-35” computer. They added that stability issues had improved to where the sensors only needed to be restarted after more than 10 hours.

“We are cautiously optimistic that these fixes will resolve the current stability problems, but are waiting to see how the software performs in an operational test environment,” the officials said in a written statement.

… (emphasis added)

A $100+ million plane that requires rebooting every ten hours? I’m not a pilot but that sounds like a real weakness.

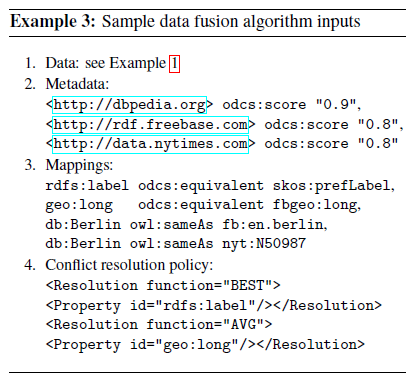

The precise nature of the software glitch isn’t described, but you can guess one of the problems from Lockheed Martin’s Software You Wish You Had: Inside the F-35 Supercomputer:

…

The human brain relies on five senses—sight, smell, taste, touch and hearing—to provide the information it needs to analyze and understand the surrounding environment.

Similarly, the F-35 relies on five types of sensors: Electronic Warfare (EW), Radar, Communication, Navigation and Identification (CNI), Electro-Optical Targeting System (EOTS) and the Distributed Aperture System (DAS). The F-35 “brain”—the process that combines this stellar amount of information into an integrated picture of the environment—is known as sensor fusion.

At any given moment, fusion processes large amounts of data from sensors around the aircraft—plus additional information from datalinks with other in-air F-35s—and combines them into a centralized view of activity in the jet’s environment, displayed to the pilot.

In everyday life, you can imagine how useful this software might be—like going out for a jog in your neighborhood and picking up on real-time information about obstacles that lie ahead, changes in traffic patterns that may affect your route, and whether or not you are likely to pass by a friend near the local park.

F-35 fusion not only combines data, but figures out what additional information is needed and automatically tasks sensors to gather it—without the pilot ever having to ask.

… (emphasis added)

The fusion of data from other in-air F-35s is a classic topic map data-merging problem.

You have one subject, say an anti-aircraft missile site, seen from up to four (in the F-35 specs) F-35s. As is the habit of most physical objects, it has only one geographic location, but the fusion computer for the F-35 doesn’t come up with that answer.

Kris Osborn writes in Software Glitch Causes F-35 to Incorrectly Detect Targets in Formation:

…

“When you have two, three or four F-35s looking at the same threat, they don’t all see it exactly the same because of the angles that they are looking at and what their sensors pick up,” Bogdan told reporters Tuesday. “When there is a slight difference in what those four airplanes might be seeing, the fusion model can’t decide if it’s one threat or more than one threat. If two airplanes are looking at the same thing, they see it slightly differently because of the physics of it.”

For example, if a group of F-35s detect a single ground threat such as anti-aircraft weaponry, the sensors on the planes may have trouble distinguishing whether it was an isolated threat or several objects, Bogdan explained.

As a result, F-35 engineers are working with Navy experts and academics from Johns Hopkins Applied Physics Laboratory to adjust the sensitivity of the fusion algorithms for the JSF’s 2B software package so that groups of planes can correctly identify or discern threats.

“What we want to have happen is no matter which airplane is picking up the threat – whatever the angles or the sensors – they correctly identify a single threat and then pass that information to all four airplanes so that all four airplanes are looking at the same threat at the same place,” Bogdan said.

…

Unless Bogdan is using “sensitivity” in a very unusual sense, that doesn’t sound like the issue with the fusion computer of the F-35.

Rather the problem is the fusion computer has no explicit doctrine of subject identity to use when it is merging data from different F-35s, whether it be two, three, four or even more F-35s. The display of tactical information should be seamless to the pilot and without human intervention.

I’m sure members of Congress were impressed with General Bogdan using words like “angles” and “physics,” but the underlying subject identity issue isn’t hard to address.

At issue is the location of a potential target on the ground. Within some pre-defined metric, anything located within a given area is the “same target.”

The Air Force has already paid for this type of analysis and the mathematics of what is called Circular Error Probability (CEP) has been published in Use of Circular Error Probability in Target Detection by William Nelson (1988).

You need to use the “current” location of the detecting aircraft, allowances for inaccuracy in estimating the location of the target, etc., but once you call out subject identity as an issue, it’s a matter of choosing how accurate you want the subject identification to be.
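To make the idea concrete, here is a minimal sketch of location-based subject identity. It is not how the F-35 fusion software works; it only illustrates the "anything within a given area is the same target" rule. Everything in it is my assumption: the `Detection` record, the flat local grid in meters, the per-report `sigma` error estimate, and the `scale` tolerance. The CEP factor of about 1.1774 holds only for equal, uncorrelated, normally distributed errors in both axes.

```python
import math
from dataclasses import dataclass

# For a circular normal error distribution, CEP (the radius containing 50%
# of position estimates) is sigma * sqrt(2 * ln 2), roughly 1.1774 * sigma.
CEP_FACTOR = math.sqrt(2 * math.log(2))


@dataclass(frozen=True)
class Detection:
    """Hypothetical report of a ground target from one aircraft."""
    aircraft: str   # illustrative identifier of the reporting aircraft
    x: float        # estimated target position, meters east on a local grid
    y: float        # estimated target position, meters north on a local grid
    sigma: float    # assumed 1-sigma position error of this estimate, meters


def cep(sigma: float) -> float:
    """Circular error probable for a circular normal error of std dev sigma."""
    return CEP_FACTOR * sigma


def same_subject(a: Detection, b: Detection, scale: float = 2.0) -> bool:
    """Subject identity rule: two reports are the same target when their
    separation is within scale * CEP of their combined position error."""
    combined_sigma = math.hypot(a.sigma, b.sigma)
    separation = math.hypot(a.x - b.x, a.y - b.y)
    return separation <= scale * cep(combined_sigma)


def merge(detections: list[Detection], scale: float = 2.0) -> list[list[Detection]]:
    """Group reports into subjects (single-linkage, via union-find)."""
    parent = list(range(len(detections)))

    def find(i: int) -> int:
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path compression
            i = parent[i]
        return i

    for i in range(len(detections)):
        for j in range(i + 1, len(detections)):
            if same_subject(detections[i], detections[j], scale):
                parent[find(i)] = find(j)

    groups: dict[int, list[Detection]] = {}
    for i, d in enumerate(detections):
        groups.setdefault(find(i), []).append(d)
    return list(groups.values())


if __name__ == "__main__":
    reports = [
        Detection("jet-1", 1000.0, 2000.0, 30.0),
        Detection("jet-2", 1025.0, 1990.0, 30.0),
        Detection("jet-3", 980.0, 2015.0, 30.0),
        Detection("jet-4", 1010.0, 2030.0, 30.0),
    ]
    for subject in merge(reports):
        print([d.aircraft for d in subject])
```

The `scale` parameter is where the "how accurate do you want it" choice lives: a larger value merges more aggressively and risks folding two real targets into one, while a smaller value risks showing one target as several.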

Before you forward this to Gen. Bogdan as a way forward on the fusion computer, realize that CEP is only one aspect of target identification. But calling out the subject identity of targets explicitly enables reliable presentation of single/multiple targets to pilots.

Your call: confusing displays or a reliable, useful display.

PS: I assume military subject identity systems would not be running XTM software. Same principles apply even if the syntax is different.
