This is the second high signal-to-noise presentation I have seen this week! I am sure that streak won’t last, but I will enjoy it as long as it does.

Resources for after you see the presentation: Hydra: Hypermedia-Driven Web APIs, JSON for Linking Data, and JSON-LD 1.0.

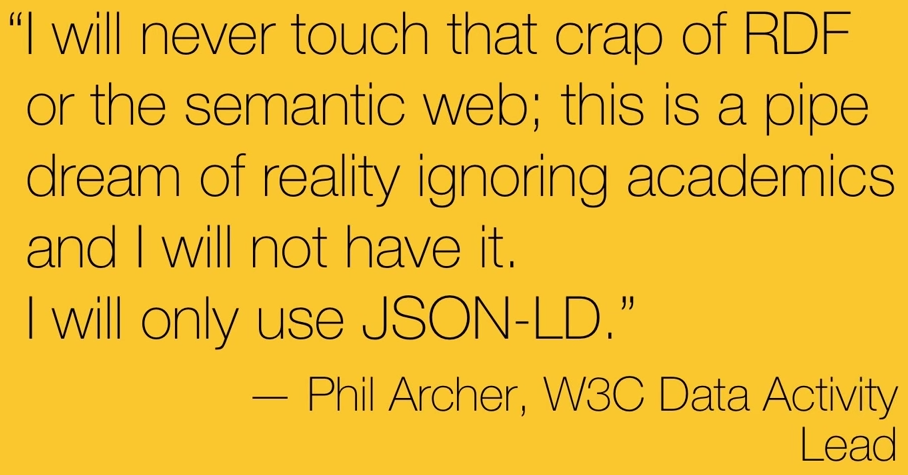

Near the end of the presentation, Marcus quotes Phil Archer, W3C Data Activity Lead:

That is an odd statement, considering that JSON-LD 1.0, Section 7, Data Model, reads in part:

JSON-LD is a serialization format for Linked Data based on JSON. It is therefore important to distinguish between the syntax, which is defined by JSON in [RFC4627], and the data model which is an extension of the RDF data model [RDF11-CONCEPTS]. The precise details of how JSON-LD relates to the RDF data model are given in section 9. Relationship to RDF.

And Section 9, Relationship to RDF, reads in part:

JSON-LD is a concrete RDF syntax as described in [RDF11-CONCEPTS]. Hence, a JSON-LD document is both an RDF document and a JSON document and correspondingly represents an instance of an RDF data model. However, JSON-LD also extends the RDF data model to optionally allow JSON-LD to serialize Generalized RDF Datasets. The JSON-LD extensions to the RDF data model are:…

Is JSON-LD “…a concrete RDF syntax…” where you can ignore RDF?

Not that I was ever a fan of RDF, but standards should be fish or fowl and not attempt to be something in between.

Patrick, you’ve read Manu Sporny’s essay on the origins of the JSON-LD standard, right? If not, I think it helps explain its apparently contradictory relationship with RDF and the Semantic Web.

http://manu.sporny.org/2014/json-ld-origins-2/

JSON-LD is absolutely an RDF syntax where you can ignore RDF, and I would say this is essential for reaching its target audience of web developers. The goal is to make it as easy as possible for web developers to add semantics to their content, meaning they don’t have to know a single thing about RDF, any of the Semantic Web standards, or supporting technologies in order to use it. They don’t have to make any changes to their JSON documents, either, because a JSON-LD context document can be referenced from an HTTP Link header rather than from the document body.
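To make the HTTP header route concrete: JSON-LD 1.0 lets a server point at a context with an HTTP `Link` header whose relation is `http://www.w3.org/ns/json-ld#context`, so the JSON body itself stays exactly as it was. The Python sketch below only builds and reads that header. The context URL and the helper names `context_link_header` and `find_context_url` are hypothetical, and the parser is deliberately naive (it splits on commas and semicolons, so it would break on URLs containing them).

```python
# Hypothetical context URL; the existing JSON body does not change.
CONTEXT_URL = "http://example.com/contexts/person.jsonld"

# Link relation that JSON-LD 1.0 defines for referencing a context over HTTP.
JSONLD_CONTEXT_REL = "http://www.w3.org/ns/json-ld#context"


def context_link_header(context_url):
    """Build a Link header value that references a JSON-LD context."""
    return f'<{context_url}>; rel="{JSONLD_CONTEXT_REL}"; type="application/ld+json"'


def find_context_url(link_header):
    """Return the context URL from a Link header, or None if there is none.

    Naive on purpose: splitting on ',' and ';' breaks on URLs that contain them.
    """
    for link in link_header.split(","):
        target, *params = [part.strip() for part in link.split(";")]
        if f'rel="{JSONLD_CONTEXT_REL}"' in params:
            return target.strip("<>")
    return None


# Headers a server might send alongside an unchanged application/json body.
headers = {
    "Content-Type": "application/json",
    "Link": context_link_header(CONTEXT_URL),
}
print(headers["Link"])
print(find_context_url(headers["Link"]))
```

Note the media type: per JSON-LD 1.0, a linked context is honored for plain JSON responses such as `application/json`, while documents served as `application/ld+json` must carry their context in the body and a context supplied via the `Link` header is ignored.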

It’s also more than an RDF syntax. It’s a technology that allows one to create a schema crosswalk between JSON document schemas and RDF models in order to generate RDF triples from a set of JSON documents. My own experience tells me that if you focus on the RDF syntax aspect, and maybe also if you look at it from a semantic modeling background rather than a web developer background, this feature is easy to overlook or misunderstand. I myself did not understand it for several months after I started learning about JSON-LD, because I was initially evaluating it as an RDF serialization format. I don’t think I’m the only one who has had this problem, either, because I’m pretty sure I’ve seen blog posts announcing the release of various OWL ontologies in JSON-LD format rather than example JSON-LD contexts that would demonstrate how to use those ontologies with existing JSON documents. I think this misunderstanding is unfortunate because I’m pretty sure this is the core feature of the standard — being something in between JSON and RDF is the whole point.
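A rough illustration of the crosswalk idea: the plain JSON a developer already publishes, plus a small context mapping its keys to schema.org IRIs, is enough to yield RDF triples. This Python is a toy written for illustration, not a JSON-LD processor. It handles only a flat object with string values, and the document, the context, and `to_ntriples` are all hypothetical. A conforming processor also handles nesting, arrays, prefixes, language tags, blank nodes, and relative IRIs.

```python
import json

# Hypothetical JSON document, as a web developer might already publish it.
doc = {
    "id": "http://example.com/people/alice",
    "name": "Alice",
    "homepage": "http://example.com/alice",
}

# Hypothetical context: "id" is aliased to @id, the other keys map to schema.org.
context = {
    "id": "@id",
    "name": "http://schema.org/name",
    "homepage": {"@id": "http://schema.org/url", "@type": "@id"},
}


def to_ntriples(doc, context):
    """Toy crosswalk: flat JSON object + flat context -> N-Triples lines."""
    # Find the key aliased to @id; it supplies the subject IRI.
    id_key = next((k for k, v in context.items() if v == "@id"), "@id")
    subject = doc[id_key]
    lines = []
    for key, value in doc.items():
        if key == id_key or key not in context:
            continue  # unmapped keys are skipped, roughly as JSON-LD expansion drops them
        term = context[key]
        if isinstance(term, dict):
            iri, value_is_iri = term["@id"], term.get("@type") == "@id"
        else:
            iri, value_is_iri = term, False
        # json.dumps is a shortcut for quoting simple string literals.
        obj = f"<{value}>" if value_is_iri else json.dumps(value)
        lines.append(f"<{subject}> <{iri}> {obj} .")
    return lines


for line in to_ntriples(doc, context):
    print(line)
# <http://example.com/people/alice> <http://schema.org/name> "Alice" .
# <http://example.com/people/alice> <http://schema.org/url> <http://example.com/alice> .
```

Swapping in a different context (say, one mapping `name` to a FOAF property) would produce different triples from the identical JSON, which is the "in between" role described above.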

Comment by marijane — October 16, 2014 @ 2:25 am

marijane: Apologies for the slow response! Carol broke her left arm and shattered her wrist at work, so I have taken over all the two-handed tasks at home. Another two weeks before we find out what follows five weeks in a cast.

Thanks for the pointer to Manu Sporny’s essay on the origins of JSON-LD! At least a 9.2 on the rant scale. But he is also a gifted explainer, as I saw when I viewed his intro video on JSON-LD.

Let’s assume that OWL ontologies begin to appear as example JSON-LD contexts, so web developers can ignore the semantics and learn JSON-LD by rote. That is very likely to be successful, for the same reason

But, when semantics are disconnected from syntax that is alleged to represent them, how useful are the resulting semantics? I ask because I don’t think we know the answer to that question. I recently posted about the LSD-Dimensions project, which warns that the semantics you find may not be the semantics you are expecting. Not surprising, but how often that will be the case with JSON-LD remains unknown.

I suspect that, like “sameAs,” the semantics of JSON-LD are going to vary from web developer to web developer, with communities developing non-uniform practices with regard to those semantics. Will making these semantic markers web-accessible make them easier to resolve? You will still have to consult the original data for each such determination.

Nothing against JSON-LD; it may be the simple syntax that is needed to enable fast annotation of HTML documents, which are then converted into the fuller semantics represented by a topic map. And then matched back against a document to ask the author if this is what they intended? Hard to say.

Comment by Patrick Durusau — October 21, 2014 @ 11:42 am

Apologies from me as well, for my slow response. Five weeks in a cast, yuck! Please send Carol my best wishes for a speedy recovery.

You ask, “But, when semantics are disconnected from syntax that is alleged to represent them, how useful are the resulting semantics?” I have wondered about that as well, especially because I’ve seen a lot of JSON-LD contexts borrowing concepts from different vocabularies that may not share the same semantics, and it’s not clear to me what you do with your data if you choose to represent it that way.

But then I see how Google is facilitating the use of JSON-LD, such as…

https://developers.google.com/schemas/

https://developers.google.com/webmasters/richsnippets/

https://developers.google.com/gmail/actions/

or when I see stuff like this…

https://support.google.com/webmasters/answer/4620709

https://support.google.com/webmasters/answer/4620133

… and I think a bunch of things in response, like:

– maybe the question to ask isn’t “how useful are the resulting semantics?” but “who are the resulting semantics useful to?”

– or maybe “what can you do with the resulting semantics?”

– a critical mass of uniform practices seems to be coalescing around schema.org

– JSON-LD seems to be instrumental in the development of this critical mass, which makes sense given the popularity of JSON

– I have a feeling developers are going to use whatever semantics Google tells them to

– the semantic markup authors apply to their content is really an API

– the API creator’s intent is more important than the author’s intent, because it’s up to the author to make sure they’re understood by the API

I’m not sure if this is quite what folks originally had in mind for the semantic web, and I’m not sure if it would be possible without organizations the size of Google, Yahoo, and Microsoft driving it, but it seems to be heading off in a useful direction.

Comment by marijane — October 29, 2014 @ 12:53 am

We will know more early next week about Carol’s arm. Either another cast or starting a long road of physical therapy. Thanks for the best wishes!

I think your question, “who are the resulting semantics useful to?”, captures the idea of a range of semantic precision, which may be why JSON-LD succeeds (by some definition of success).

Within a domain, group, etc., some words have precise enough semantics that members use them without fear of being misunderstood.

But we know that as the range of information members of any domain can search increases, it eventually reaches a point where words may or may not mean what you think they mean.

While JSON-LD leaves a lot of semantics on the table, perhaps the effort required to use it will narrow the usage of the terms it specifies to an acceptable level of ambiguity. The semantics may not be precise, but they are good enough to keep subjects within broad classes.

That would certainly improve current search results.

No, the original semantic web was to be composed of “reasoning” (sic) agents that could process logical statements and reach deterministic results from content on the WWW.

I like the Google strategy because it relies upon the natural incentive authors have to get their content noticed. It offers a payoff that doesn’t have to wait for everyone to encode their data as well.

Comment by Patrick Durusau — October 29, 2014 @ 9:42 am