Investigating news reports of Twitter enabling muting of words and hashtags led me to Advanced muting options on Twitter. Also relevant is Muting accounts on Twitter.

Alex Hern’s post: Twitter users to get ability to mute words and conversations prompted this search because I found:

After nine years, Twitter users will finally be able to mute specific conversations on the site, as well as filter out all tweets with a particular word or phrase from their notifications.

The much requested features are being rolled out today, according to the company. Muting conversations serves two obvious purposes: users who have a tweet go viral will no longer have to deal with thousands of replies from strangers, while users stuck in an interminable conversation between people they don’t know will be able to silently drop out of the discussion.

A broader mute filter serves some clear general uses as well. Users will now be able to mute the names of popular TV shows, for instance, or the teams playing in a match they intend to watch later in the day, from showing up in their notifications, although the mute will not affect a user’s main timeline. “This is a feature we’ve heard many of you ask for, and we’re going to keep listening to make it better and more comprehensive over time,” says Twitter in a blogpost.

…

to be too vague to be useful.

Starting with Advanced muting options on Twitter, you don’t have to read far to find:

Note: Muting words and hashtags only applies to your notifications. You will still see these Tweets in your timeline and via search. The muted words and hashtags are applied to replies and mentions, including all interactions on those replies and mentions: likes, Retweets, additional replies, and Quote Tweets.

That’s the second paragraph, displayed with a highlighted background.

So, “muting” of words and hashtags only stops notifications.

“Muted” offensive or inappropriate content is still visible “in your timeline and search.”

Perhaps really muting based on words and hashtags will be a paid subscription feature?

The other curious aspect is that “muting” an account carries an entirely different meaning.

The first sentence in Muting accounts on Twitter reads:

Mute is a feature that allows you to remove an account’s Tweets from your timeline without unfollowing or blocking that account.

Quick Summary:

- Mute account – Tweets don’t appear in your timeline.

- Mute by word or hashtag – Tweets do appear in your timeline.

How lame is that?

Solution That Avoids Censorship

The solution to Twitter’s “hate speech,” which means different things to different people, isn’t hard to imagine:

- Mute by account, word, hashtag or regex – Tweets don’t appear in your timeline.

- Mute lists can be shared and/or followed by others.

Which means that if I trust N’s judgment on “hate speech,” I can follow their mute list. That saves me the effort of constructing my own mute list and perhaps even encourages the construction of public mute lists.
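To make the proposal concrete, here is a minimal sketch in Python of how such a filter could work. Everything in it is my own assumption for illustration: the `Tweet` and `MuteList` classes, the `visible_timeline` function and the matching rules are hypothetical and have nothing to do with Twitter's actual code or API. It only shows that muting by account, word, hashtag or regex, combined with followed mute lists, comes down to a simple filter over the timeline.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Tweet:
    # Hypothetical, simplified tweet: just an author handle and the text.
    author: str
    text: str


@dataclass
class MuteList:
    # Hypothetical shareable mute list, owned by one user.
    owner: str
    accounts: set = field(default_factory=set)   # handles to mute
    words: set = field(default_factory=set)      # whole words to mute
    hashtags: set = field(default_factory=set)   # hashtags, with or without '#'
    patterns: list = field(default_factory=list)  # regex strings

    def matches(self, tweet: Tweet) -> bool:
        """Return True if this list would mute the given tweet."""
        if tweet.author.lower() in {a.lower() for a in self.accounts}:
            return True
        # Split the text into lowercase tokens, keeping a leading '#'.
        tokens = set(re.findall(r"#?\w+", tweet.text.lower()))
        if any(w.lower() in tokens for w in self.words):
            return True
        if any("#" + h.lower().lstrip("#") in tokens for h in self.hashtags):
            return True
        return any(re.search(p, tweet.text, re.IGNORECASE) for p in self.patterns)


def visible_timeline(timeline, own_list, followed_lists=()):
    """Drop every tweet muted by my own list or by any list I follow."""
    lists = [own_list, *followed_lists]
    return [t for t in timeline if not any(m.matches(t) for m in lists)]


if __name__ == "__main__":
    timeline = [
        Tweet("alice", "Spoiler: the finale was great"),
        Tweet("bob", "Lunch time"),
        Tweet("troll42", "hello"),
        Tweet("carol", "Watching #BigMatch now"),
    ]
    mine = MuteList(owner="me", words={"spoiler"}, hashtags={"BigMatch"})
    # A list published by N, whose judgment I trust, that I choose to follow.
    n_list = MuteList(owner="N", accounts={"troll42"})
    for tweet in visible_timeline(timeline, mine, [n_list]):
        print(tweet.author, tweet.text)
```

Nothing is removed from Twitter in this sketch. The muted tweets stay where they are for everyone else; they simply never reach my timeline. Following N's list is one extra argument, and unfollowing it is just as easy.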

Twitter has the technical capability to produce such a solution in short order, so you have to wonder why they haven’t. I have no delusion of being the first person to have imagined such a solution. Twitter? Comments?

The Alternative Solution – Roving Citizen-Censors

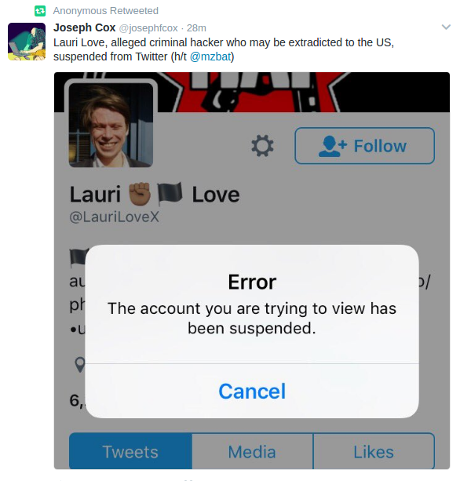

The alternative to a clean and non-censoring solution is covered in the USA Today report Twitter suspends alt-right accounts:

Twitter suspended a number of accounts associated with the alt-right movement, the same day the social media service said it would crack down on hate speech.

Among those suspended was Richard Spencer, who runs an alt-right think tank and had a verified account on Twitter.

The alt-right, a loosely organized group that espouses white nationalism, emerged as a counterpoint to mainstream conservatism and has flourished online. Spencer has said he wants blacks, Asians, Hispanics and Jews removed from the U.S.

…

[I personally find Richard Spencer’s views abhorrent and report them here only by way of example.]

From the report, Twitter didn’t go gunning for Richard Spencer’s account but the Southern Poverty Law Center (SPLC) did.

The SPLC didn’t follow more than 100 white supremacists to counter their outlandish claims or to offer a counter-narrative. They followed them to gather evidence of alleged violations of Twitter’s terms of service and to request removal of those accounts.

Government censorship of free speech is bad enough; enabling roving bands of self-righteous citizen-censors to do the same is even worse.

The counter-claim that Twitter isn’t the government, it’s not censorship, etc., is intellectually and morally dishonest. It is technically true in the U.S. constitutional law sense, but suppression of speech is the goal and that’s censorship, whatever fig leaf the SPLC wants to put on it. They should be honest enough to claim and defend the right to censor the speech of others.

I would not vote in their favor, that is to say, I would not agree that they have a right to censor the speech of others. They are free to block speech they don’t care to hear, which is what my solution to “hate speech” on Twitter enables.

Support muting, not censorship or roving bands of citizen-censors.